What I Learned from Creating A.I. Generated Art for 30 Days

Sept. 20, 2021

About two months ago I discovered VQGAN+CLIP, a hacked-together piece of machine learning software that creates images from words and phrases. After experimenting with different settings and witnessing the explosion of art in the Reddit communities r/bigsleep and r/deepdream, I decided to commit to creating and posting one VQGAN+CLIP piece each day, to explore what artificial intelligence is capable of in art imitation and creation.

VQGAN+CLIP

First, a bit of background on VQGAN+CLIP. At a high level, it has two components: VQGAN, a generative adversarial network (the GAN in VQGAN) that produces the images, and CLIP, a neural network created by OpenAI that scores how well an image matches a piece of text. I won't get into the technical details here, but feel free to explore this technique yourself using this Google Colab. None of my artwork would exist without the insights and engineering of Katherine Crowson (@RiversHaveWings) and Ryan Murdock (@advadnoun), who hacked together this software in early 2021.
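For the curious, the feedback loop between the two components can be sketched in a few lines of Python. This is a toy under loud assumptions, not the real software: `toy_image_features` stands in for "generate an image with VQGAN, then embed it with CLIP", `toy_clip_score` stands in for CLIP's text-image similarity, and the four-number `text_embedding` is made up. The real notebooks use neural networks and backpropagation; here a crude finite-difference gradient plays that role.

```python
import math
import random


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def toy_image_features(latent):
    # Hypothetical stand-in for "decode the latent into an image with VQGAN,
    # then embed that image with CLIP". Here it simply copies the latent.
    return list(latent)


def toy_clip_score(image_features, text_embedding):
    # Hypothetical stand-in for CLIP: higher means a better text-image match.
    return cosine_similarity(image_features, text_embedding)


def optimize(text_embedding, steps=200, lr=0.1, eps=1e-4, seed=0):
    """Nudge a random latent so its score against the text keeps rising."""
    rng = random.Random(seed)
    latent = [rng.uniform(-1.0, 1.0) for _ in text_embedding]
    history = []  # score recorded before each update
    for _ in range(steps):
        base = toy_clip_score(toy_image_features(latent), text_embedding)
        history.append(base)
        # Finite-difference estimate of the gradient (an assumption made to
        # keep the sketch dependency-free; real code would use autograd).
        grad = []
        for i in range(len(latent)):
            bumped = list(latent)
            bumped[i] += eps
            bumped_score = toy_clip_score(toy_image_features(bumped), text_embedding)
            grad.append((bumped_score - base) / eps)
        # Gradient ascent: move the latent toward a higher score.
        latent = [v + lr * g for v, g in zip(latent, grad)]
    return latent, history


text_embedding = [0.8, -0.3, 0.5, 0.1]  # made-up embedding of a prompt
latent, history = optimize(text_embedding)
final_score = toy_clip_score(toy_image_features(latent), text_embedding)
print(f"score before: {history[0]:.3f}, score after: {final_score:.3f}")
```

Saving the image at each step of a loop like this is what produces the frame-by-frame animations people share.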

After 30 days, here are some of my key findings

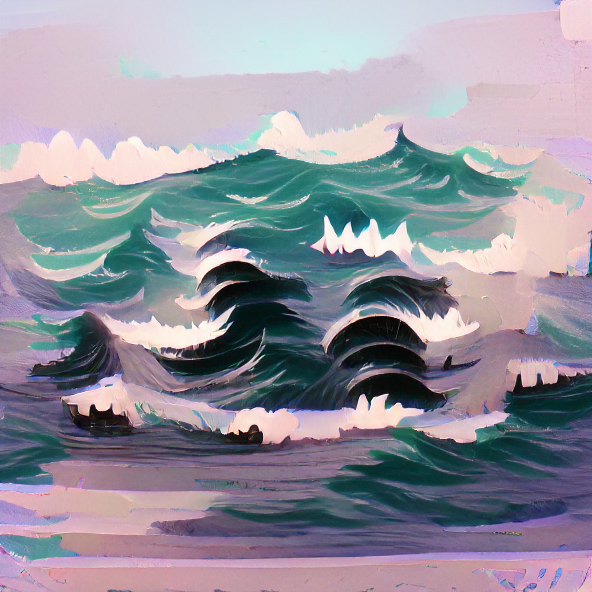

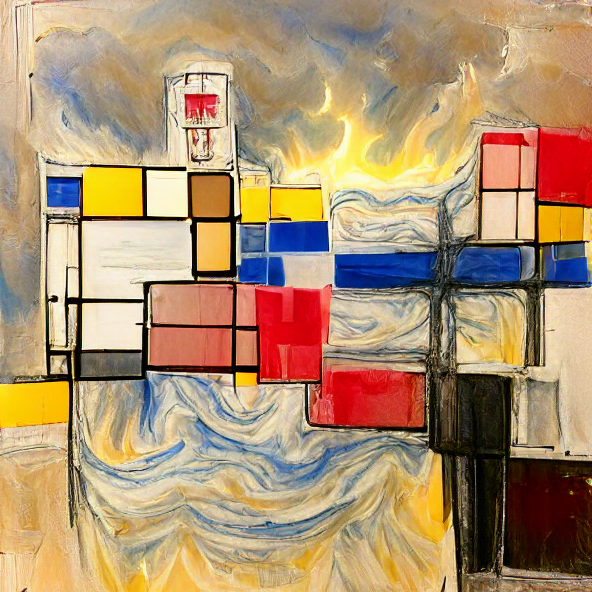

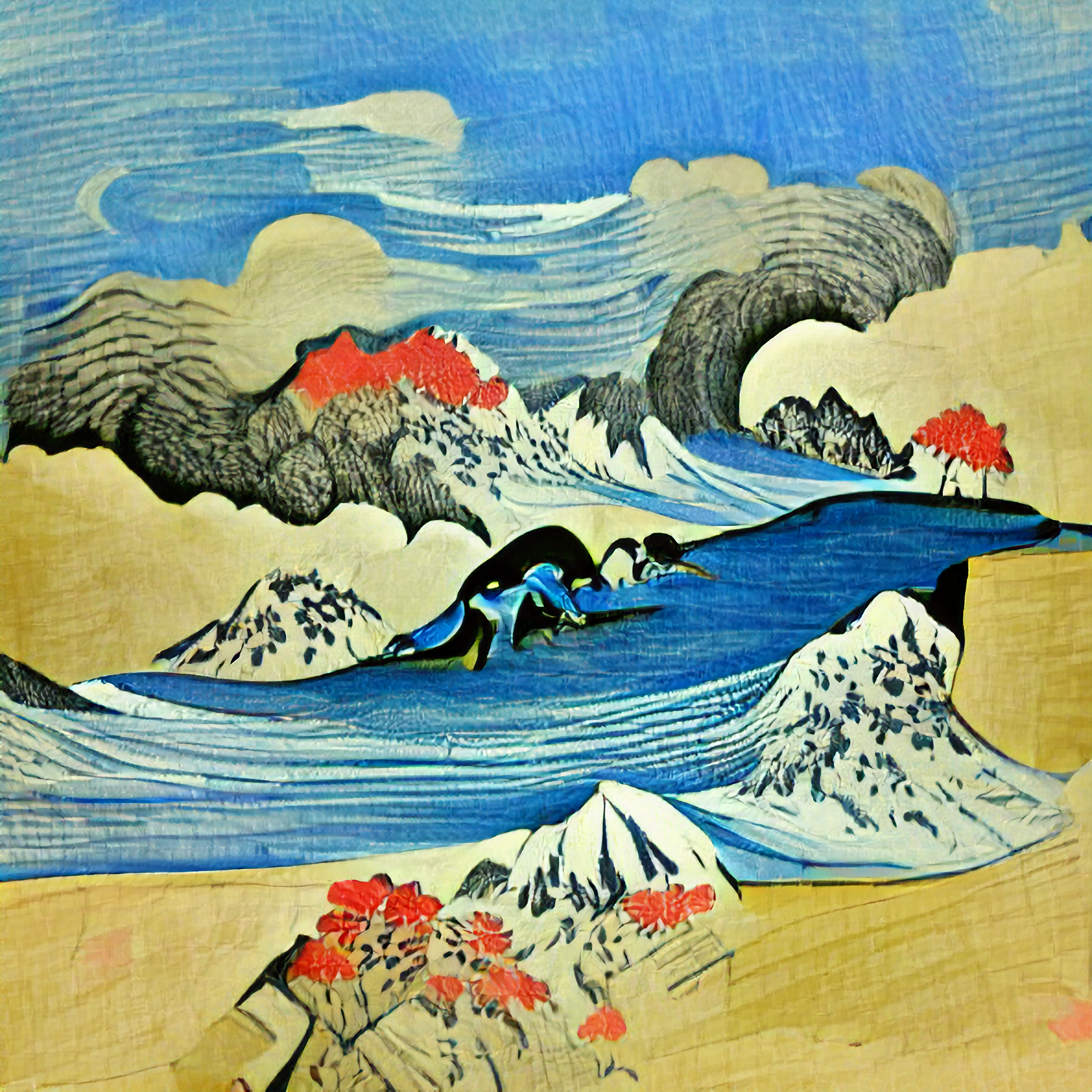

VQGAN+CLIP can do many of the things neural art style transfer can do when it comes to emulating artists. Simply adding "Painted by (artist's name)" gives us a realistic glimpse into what it would look like if that artist painted our scene. Doing this has helped me produce some of my most visually compelling pieces.

Certain painting styles work really well. My three watercolor attempts are an example: for these I added "Painted in a watercolor style" to the prompt. The same approach extends easily to acrylic, oil, or any other art medium of choice.
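Both tricks, naming an artist and naming a medium, come down to appending a short phrase to the scene description. Here is a minimal sketch of that idea in Python. The `build_prompt` helper, the example scene, and the choice to join the parts with a period are my own assumptions for illustration; VQGAN+CLIP notebooks just take a text prompt, and they may expect a different separator.

```python
def build_prompt(scene, artist=None, medium=None):
    """Assemble a text prompt from a scene plus optional style phrases.

    Hypothetical helper: the phrase wording follows the article, but the
    ". " separator is an assumption, not a rule of any notebook.
    """
    parts = [scene.strip()]
    if artist:
        parts.append(f"Painted by {artist}")
    if medium:
        parts.append(f"Painted in a {medium} style")
    return ". ".join(parts)


# Example scene text is made up for illustration.
print(build_prompt("A misty forest at dawn", artist="Thomas Cole"))
print(build_prompt("A misty forest at dawn", medium="watercolor"))
```

Keeping the scene and the style phrases separate makes it easy to render the same scene in several styles and compare the results side by side.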

There's a certain beauty in depicting emotions and feelings with VQGAN+CLIP. Even in traditional art, there are no clear definitions for drawing a 'sad' or 'happy' scene. It's jarring to see how AI depicts human emotions. What you are witnessing is essentially the aggregation of millions of images that depict a specific human emotion across countries and cultures. One of my favorite GIFs is below. Each frame is the output of a successive iteration.

In my experience, scenes of nature work best. This may just be me, of course, and others have created everything from futuristic-looking cities to obscure-looking faces. My most popular piece has been The Forest, which emulates the style of Thomas Cole.

What this means for the future of art

Given the mass adoption of VQGAN+CLIP, I think this is a very exciting time to explore AI as a tool for creation. VQGAN+CLIP will become more and more refined, or new and improved hacked-together techniques will take its place. These systems rely on datasets so large that it's up to users to prod and poke until they find the boundaries. This vast unknown is hard to ignore, and art generated or influenced by artificial intelligence and machine learning seems to be here to stay.

All of my daily posts can be seen on my Instagram.